Hosting a Ghost blog on AWS S3 as a static website

Before diving into what this article is all about, I would like to give a short summary of what static website hosting on S3 means and what its benefits are.

What is static website hosting on S3?

Amazon Web Services (AWS) provides a very economical file storage service called Simple Storage Service (S3 for short). S3 is extremely cheap compared to disks, is highly available and is often used for file storage. Apart from simple file storage, AWS also allows you to host your website on S3. So, if you have a static website, hosting it on S3 is a very good option for the following reasons:

- Cheap: Serving your website from S3 is much cheaper than serving it from a server, because you pay only for the storage, requests and data transfer you actually use. A small blog can cost as little as around $2 a month.

- Scalable: Since there are no servers involved, you don't need to worry about scaling as your traffic grows.

- Reliable: There is no server of your own that can go down, and S3 is designed for high availability.

If your website does not include any server-side code, there is no reason to host it on a traditional server. So, when I decided to start my own blog using Ghost, I explored the possibility of hosting it on S3 as a static website. In this article, I will share my experience and show you how you can do the same with your own blog.

Objective of this article

The purpose of this article is to demonstrate how to set up your own blog using Ghost and then show how to host it on AWS S3 as a static website. We'll also cover how you can link your Godaddy domain to your S3 bucket using Route 53. Here are the steps we will follow:

- Installing Node.js

- Setting up Ghost on your local machine

- Creating content for your blog

- Generating assets for the static website from your locally hosted Ghost blog using HTTrack

- Setting up AWS CLI

- Creating and configuring S3 buckets for your domain

- Deploying your static website assets (generated by HTTrack) to your S3 bucket

- Pointing your Godaddy domain to your S3 bucket using Route 53

Prerequisites

- You have an AWS account

- You already have a domain in Godaddy

- You are using a Linux or Unix-based system

If you are using another operating system, follow the instructions specific to that OS; the process remains more or less the same. Similarly, if your domain is registered with a registrar other than Godaddy, you will need to follow that registrar's instructions for mapping your domain to your S3 buckets. You can still follow this article even if you do not have a domain yet. However, your blog links will look something like this:

http://yourdomain.com.s3-website.ap-south-1.amazonaws.com

Instead of this:

http://yourdomain.com

So you can use S3 to host your blog even without buying a domain of your own. Also, I am using Ghost for my blog because I like its simplicity and its excellent Markdown editor, but you can apply the concepts of this tutorial to your blog even if you use a different blogging engine.

So, without further ado, let us dive in.

Step 1 — Installing Node.js

Head over to the official Node.js website, then download and install the Node.js version for your OS. I would recommend going with the LTS version. If you are using Linux, you can also install Node.js with your distribution's package manager by following the instructions for your distribution on the Node.js website.

Once it is installed, verify the installation by opening your terminal and typing the following command:

$ node -v

If it displays the Node.js version, Node.js was installed successfully. If it shows an error message instead, something probably went wrong during installation, so go through the installation instructions again.
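If you prefer, you can check for both Node.js and npm (which is installed together with Node.js and is used in Step 2) in one go. The snippet below is a small sketch; check_tool is a hypothetical helper name, not something that ships with Node.js.

```shell
# check_tool: hypothetical helper that reports whether a command is on your
# PATH and, if so, prints the first line of its --version output.
check_tool() {
  local tool="$1"
  if command -v "$tool" >/dev/null 2>&1; then
    echo "$tool found: $("$tool" --version 2>/dev/null | head -n 1)"
  else
    echo "$tool is not installed or not on your PATH" >&2
    return 1
  fi
}
```

After pasting the function into your terminal, run the following; it should print one line per tool, or an error for whichever one is missing:

$ check_tool node && check_tool npm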

Step 2 — Setting up Ghost on your local machine

We will download Ghost for developers on our local machine and use it to host our Ghost blog first on our localhost.

By default, the Ghost installation will contain some demo posts. We will then explore the admin panel of Ghost and remove the demo posts and add some new blog posts of our own.

Then we will see how our blog looks on our localhost.

First, let us create a directory on our local machine where we will keep all the files for our blog. Open your terminal and go to your home directory. Then create a new directory called my-awesome-blog. You need to run the following commands for that:

$ cd

$ mkdir my-awesome-blog

$ cd my-awesome-blog

Now we need to download Ghost for developers and copy it to our my-awesome-blog directory. Head over to the Ghost developer page, download the zip file to your local machine, copy it to your my-awesome-blog directory and unzip it. The contents of your my-awesome-blog directory should now look like this:

➜ my-awesome-blog ls -lhrt
total 552
-rw-r--r-- 1 mandeep staff 209K May 29 15:13 yarn.lock
-rw-r--r-- 1 mandeep staff 1.4K May 29 15:13 index.js
-rw-r--r-- 1 mandeep staff 3.9K May 29 15:13 README.md
-rw-r--r-- 1 mandeep staff 3.1K May 29 15:13 PRIVACY.md
-rw-r--r-- 1 mandeep staff 451B May 29 15:13 MigratorConfig.js
-rw-r--r-- 1 mandeep staff 1.0K May 29 15:13 LICENSE
-rw-r--r-- 1 mandeep staff 32K May 29 15:13 Gruntfile.js
-rw-r--r-- 1 mandeep staff 4.1K May 29 15:14 package.json
drwxr-xr-x 5 mandeep staff 170B May 29 15:16 core
drwxr-xr-x 9 mandeep staff 306B May 29 15:16 content

This is the Ghost bundle for developers, which you can use to run a Ghost blog on your local machine and see what your blog will look like. To quickly see what the blog looks like by default, run the following command from inside the my-awesome-blog directory:

$ npm install --production

Ghost is built with Node.js. Typically, when you download a Node.js project, you need to install its dependencies, and the above npm install command does that; the --production flag skips the packages listed under devDependencies, which are only needed for developing Ghost itself. Next, we need to initialize the database for our blog. Run the following command in the terminal (note that it is npx and not npm):

$ npx knex-migrator init

This will create the ghost-dev.db file in the my-awesome-blog/content/data directory. This file contains the database for our blog, and initially it contains some demo blog posts from ghost.org.

Note: npx is a handy utility that runs commands provided by npm packages, such as knex-migrator here. We don't need to know anything more about it for this tutorial, but you can read more about it here: https://github.com/zkat/npx

Once we have installed all the dependencies and initialized the database, let's start the Ghost server by running the following command:

$ npm start

You should see output like this in your terminal:

➜ my-awesome-blog npm start
> ghost@1.23.1 start /Users/mandeep/my-awesome-blog
> node index
[2018-06-02 10:36:16] WARN Theme's file locales/en.json not found.
[2018-06-02 10:36:16] INFO Ghost is running in development...
[2018-06-02 10:36:16] INFO Listening on: 127.0.0.1:2368
[2018-06-02 10:36:16] INFO Url configured as: http://localhost:2368/
[2018-06-02 10:36:16] INFO Ctrl+C to shut down
[2018-06-02 10:36:16] INFO Ghost boot 1.517s

Please note the third-to-last line in the above output, which says that the Url is configured as http://localhost:2368/

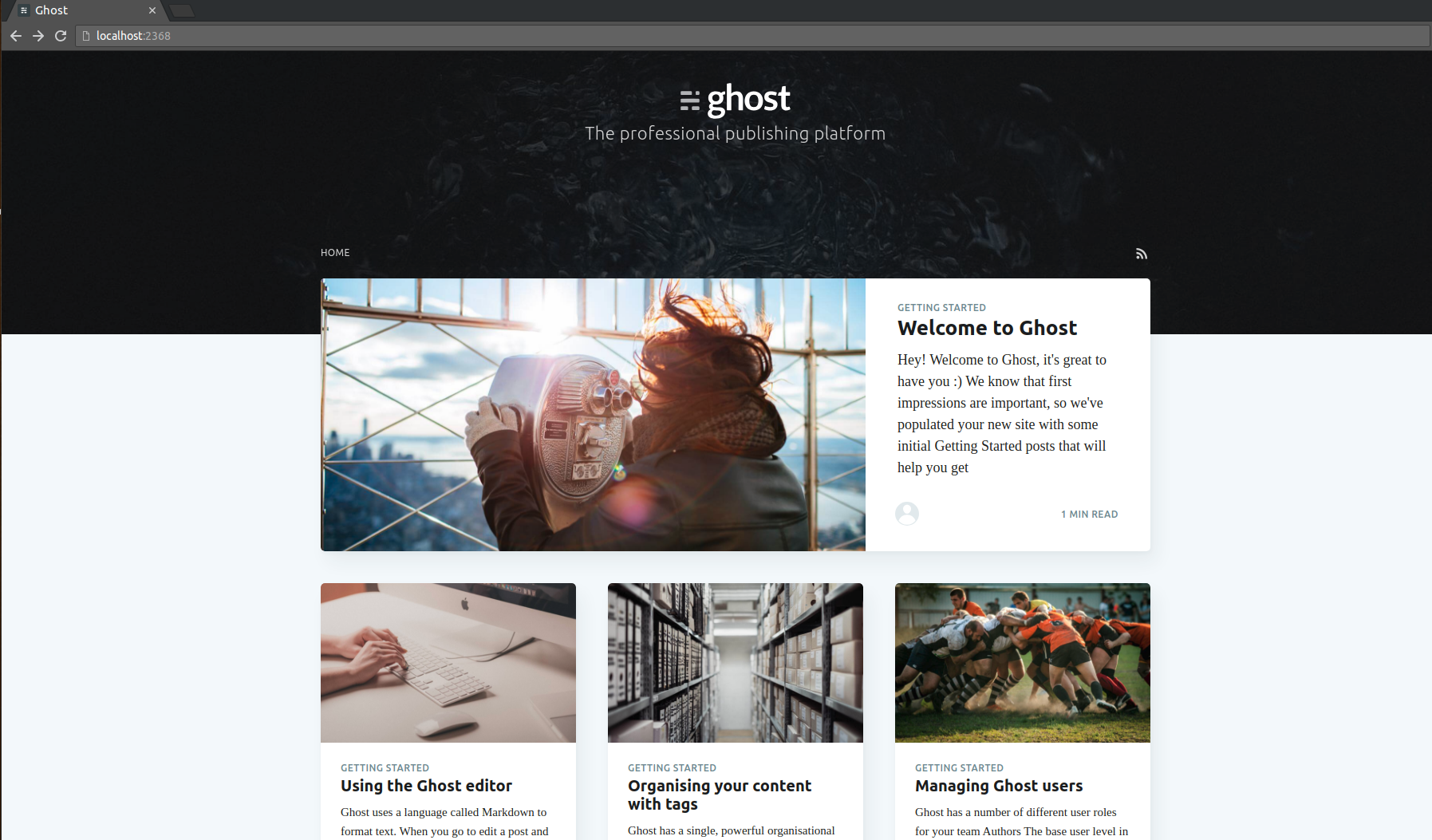

This means that your Ghost server is running on your local machine and serving the blog at the above mentioned address. Leave the server running in your terminal. Open your browser and copy paste that url in the address bar, you should see your blog. It will look something like this:

By default, your blog will contain demo posts by Ghost, each post being a tutorial on how to use various functionalities in Ghost. I would recommend that you go through these tutorials and get familiar with Ghost. In our next step, we will be deleting all of these posts and writing our own new posts using Ghost's admin panel.

Step 3 — Creating content for your blog

As we saw in Step 2, our Ghost blog contains demo posts by default. Now we will see how to manage the posts on our blog using the Ghost admin panel.

Open your browser and go to http://localhost:2368/ghost/

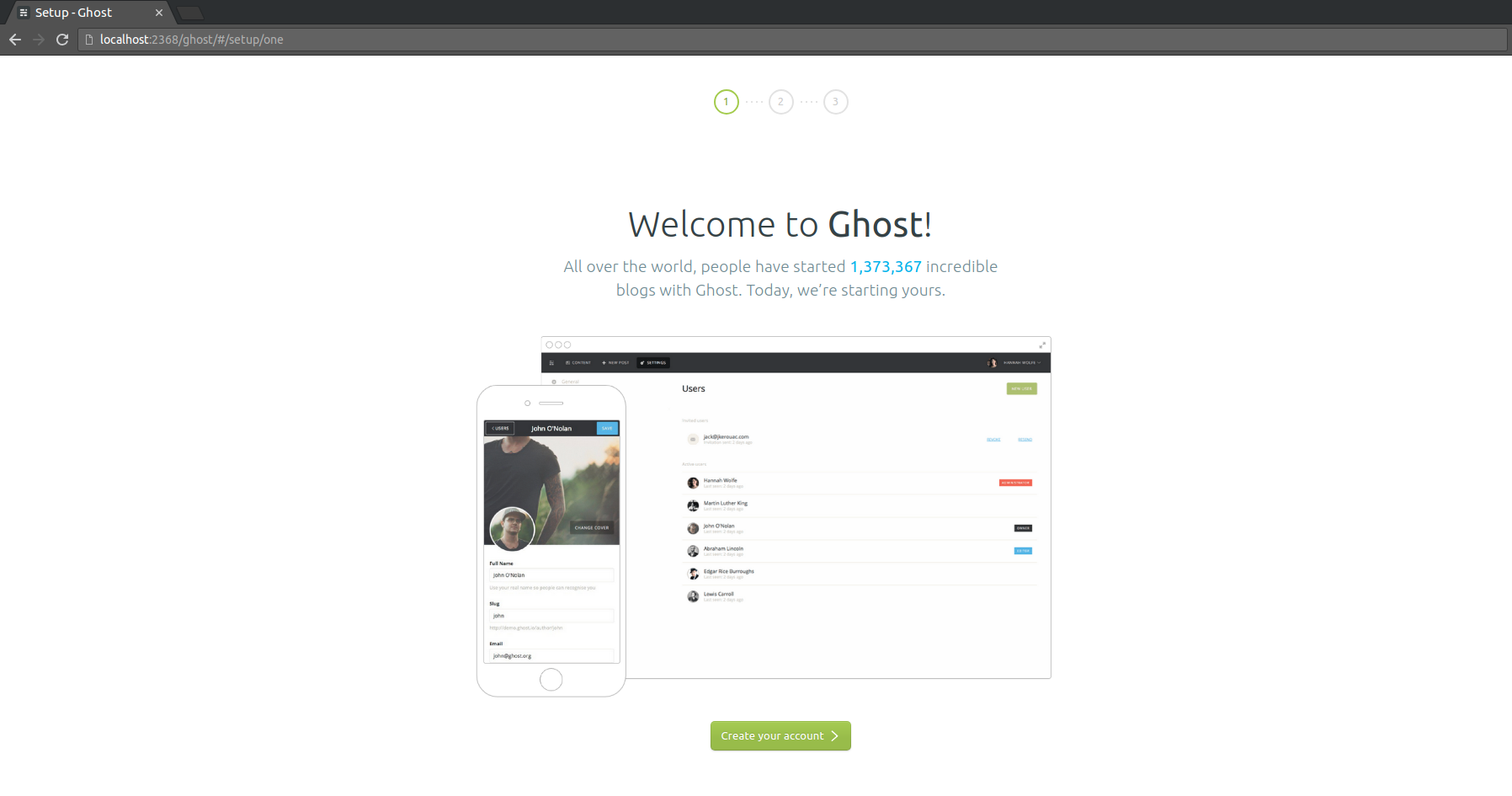

This will open the Ghost admin panel. Since this is the first time we are accessing the admin panel, it will open the wizard for creating a new admin user. Here's how it looks like:

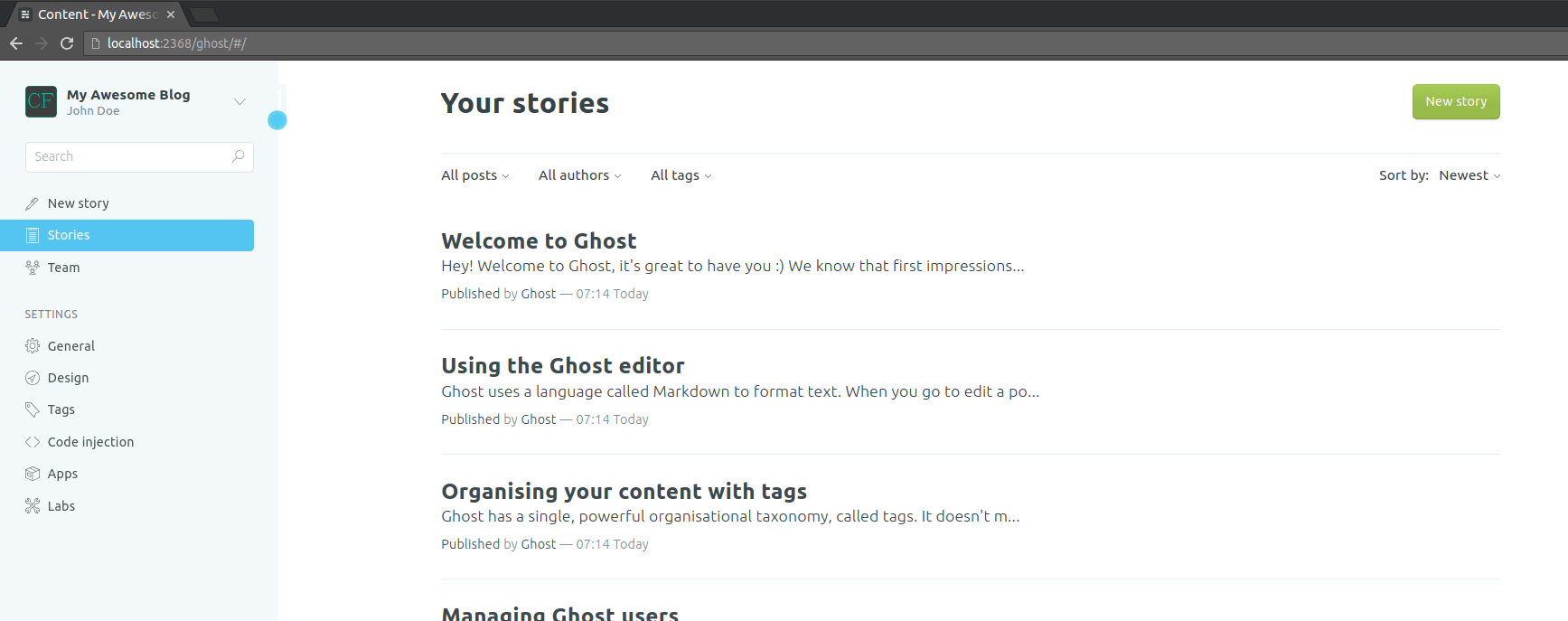

Go ahead and follow the wizard to create a new account and set the password for the same. For now, we do not need to invite anybody so you can skip the last step where it asks you to invite your team. Once you have created the admin account, you will see the admin panel which will look something like this:

You can see all the posts here. In Ghost's terminology, a blog post is called a story. So, in the left side navigation pane, you can see the stories tab and if you click on that you will see all the stories on the right hand side panel. If you click on the title of any of the stories, it will open that story for editing. Feel free to play around with the admin panel. Go edit some stories, save your changes, publish your changes (Update/Publish button at top right corner), play with settings of your story (gear icon on top right corner). Get familiar with the admin panel. Once you are confident with the admin panel, let's go ahead and delete all the existing blog posts and create some new posts.

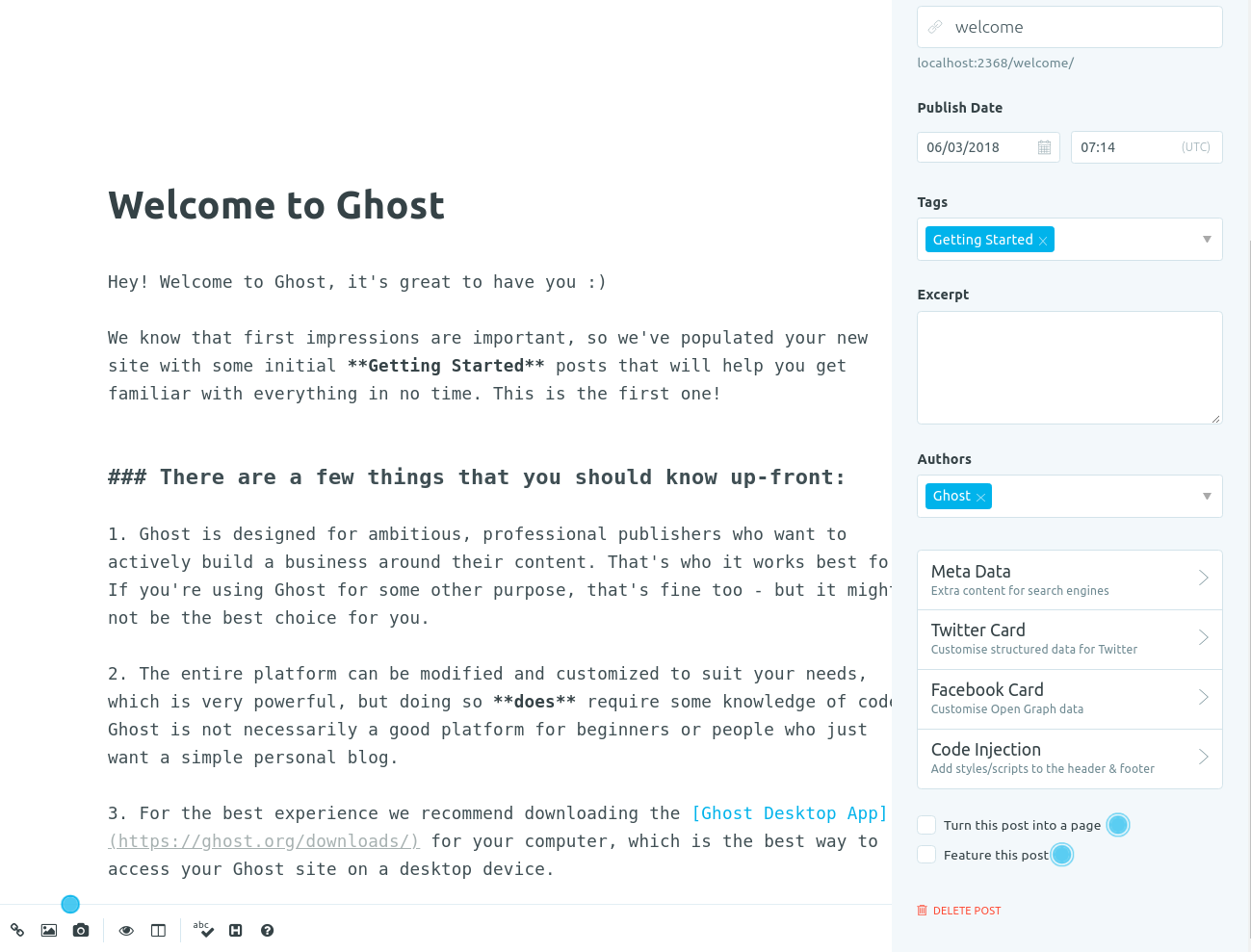

In order to delete the old blog posts, you can open each of the stories, click on the gear icon on the top right corner, scroll to the buttom and click on Delete Post button. Do this for all the posts. Here is a screenshot for reference (look at the bottom right corner):

Once you have deleted all the posts, click the New story button in the left side navigation panel. This will start a new blog post with the Markdown editor in the right hand side panel. If you are familiar with Markdown syntax, go ahead and play with the editor to get familiar with it; there is a lot you can do with it. Then tweak the settings of the post by clicking the gear icon in the top right corner. Once you have written your blog post, click the Publish button in the top right corner to publish it. Once it is published, you can view your blog by clicking the View site button at the bottom of the left hand side panel. You should see your blog post there.

Great! Now we have the content ready for our awesome blog. The blog is currently being served by the Node.js server that we started with the npm start command. As of now, we cannot host our blog on AWS S3 because it is not a static website: its content is being served dynamically by the Node.js server. To host our blog on S3, we need to generate the static website corresponding to our blog, that is, all of its pages as static HTML, CSS and JS files that we can upload to S3. That's what we are going to do in our next step using HTTrack.

Step 4 — Generating assets for static website from your locally hosted ghost blog using HTTrack

We will use website mirroring to generate our static website from our blog. Mirroring a website means crawling all pages of a website to create a replica of it on our local machine. This can be complicated for large, complex websites, but since our blog is a simple site with simple content and it is hosted locally, mirroring it is easy. There are many tools for mirroring a website, for example wget and HTTrack. For this tutorial, we will be using HTTrack.

HTTrack is a command line utility for downloading content from the web. It is typically used for mirroring websites to create a copy of them on your local machine. To install HTTrack, visit its download page and follow the instructions for your OS. On Ubuntu, you can simply install it by running the following command in the terminal:

$ sudo apt-get install httrack

Verify the installation by running the following command in your terminal:

$ httrack --version

If installed, it will display the version of the HTTrack installed on your machine. Once you have HTTrack installed, let us use it to mirror our blog which is being served dynamically.

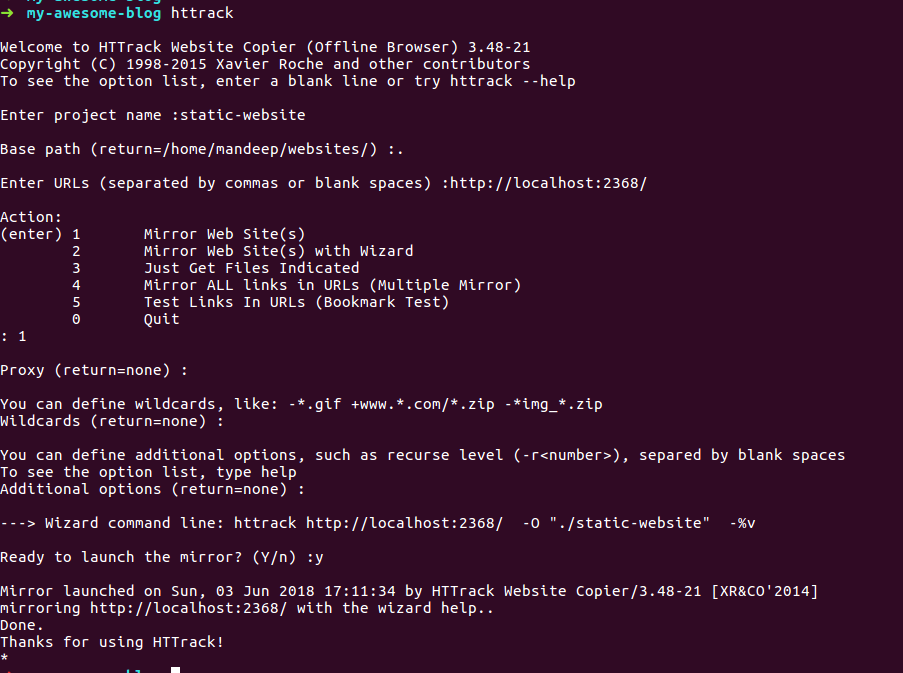

Make sure that your Ghost Node.js server is running in terminal (the npm start command that we executed earlier). Keep it running and open another terminal window or tab. Go to my-awesome-blog directory and from that directory, run the command:

$ httrack

This will start a wizard for mirroring your blog. It will ask you for project name. Enter the name as static-website.

Then it will ask for a base path. Enter a dot (.) there. This tells HTTrack to store the project, and therefore the static assets, inside the my-awesome-blog/static-website directory.

Then it will ask for URLs. Enter the URL of the blog on your localhost, that is http://localhost:2368/

Then it will ask for options:

Action:
(enter) 1 Mirror Web Site(s)
2 Mirror Web Site(s) with Wizard
3 Just Get Files Indicated
4 Mirror ALL links in URLs (Multiple Mirror)
5 Test Links In URLs (Bookmark Test)
0 Quit

Enter 1 to mirror the website. For the next options, just skip them by pressing ENTER.

Finally it will ask if you are ready to launch the mirror. Type Y and press ENTER.

It will then start mirroring and let you know once it has finished.

This will crawl our entire blog. It will create a directory static-website inside the my-awesome-blog directory. Inside the static-website directory, there will be another directory localhost_2368. Inside the localhost_2368 directory you will find all the assets and index.html for your static website. The directory structure should look similar to this:

- my-awesome-blog
  | - static-website
    | - localhost_2368
      | - favicon.ico
      | - public
      | - author
      | - assets
      | - hello
      | - index.html

Instead of hello, you might see a directory named after the blog post that you wrote earlier.
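On later rebuilds you may prefer to skip the wizard. HTTrack's -O option sets the output path, so a command along the lines of the sketch below should produce the same static-website/localhost_2368 layout as the wizard answers above. Treat it as an untested sketch and compare the result with what the wizard produced; mirror_blog is a hypothetical helper name.

```shell
# mirror_blog: hypothetical wrapper around HTTrack. Run it from the
# my-awesome-blog directory while `npm start` is serving the blog.
mirror_blog() {
  local blog_url="${1:-http://localhost:2368/}"  # local Ghost URL
  local out_dir="${2:-./static-website}"         # HTTrack project directory
  # -O sets the output path; HTTrack should create the localhost_2368
  # sub-directory inside it, as the interactive wizard did.
  httrack "$blog_url" -O "$out_dir"
}
```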

Congratulations! You have successfully created the static assets from your Ghost blog. Now you just need to upload these assets to AWS S3 so that the whole world can see your awesome blog.

Note: From this step onwards, you will need an AWS account.

Step 5 — Setting up AWS CLI

AWS CLI is the command line interface that allows you to perform various actions on AWS from your terminal. Once we have created the assets for our static website, we need to upload them to an S3 bucket. While this can be done using the AWS Console in your web browser, copying all the files while maintaining the directory structure would be quite a tedious task. Also, every time we change anything in our blog, we will need to run the httrack command to generate the static website assets again and then sync the updated directory with the S3 bucket (removing deleted files, updating changed files and adding new files). Doing this via the web browser is not practical, but with the AWS CLI it can be done with a single command. So, it is important for us to configure the AWS CLI on our local machine.

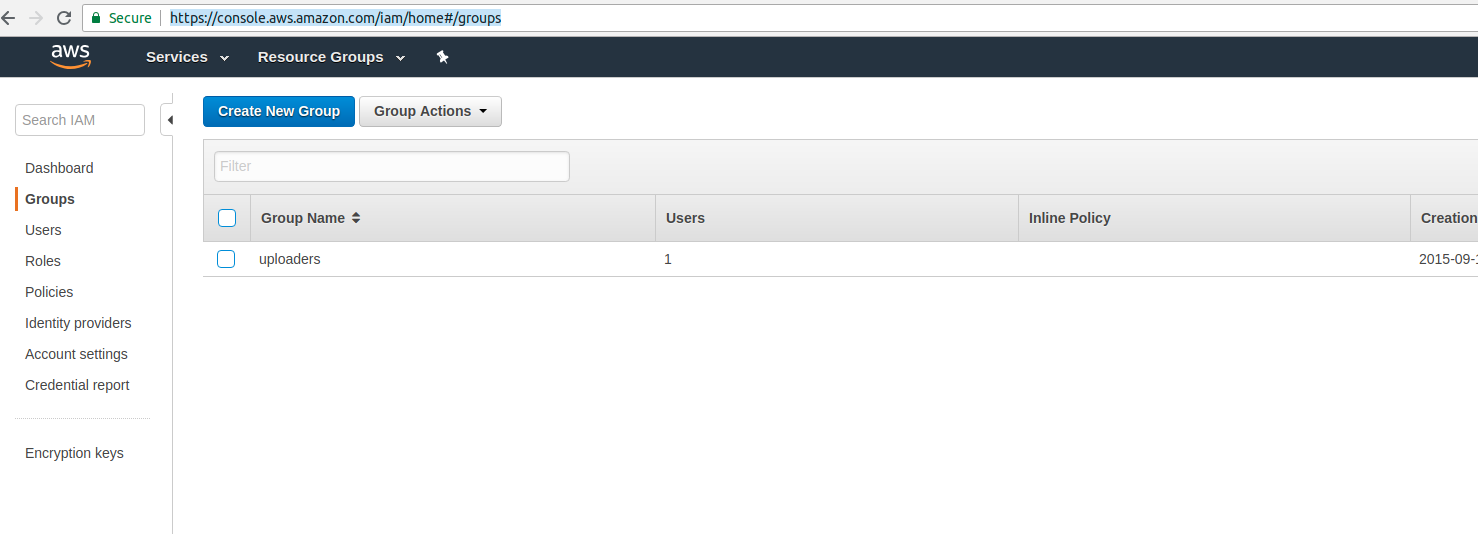

To set up the AWS CLI, we need an IAM user in AWS and the Access Key ID and Secret Access Key for that IAM user. IAM stands for Identity and Access Management. It is an AWS service that allows us to create users, groups and roles, and to control which users belong to which groups and which groups may perform which actions. In short, all the access controls that we want to impose on our AWS resources can be managed via IAM. Discussing everything IAM can do is beyond the scope of this tutorial, and we don't need it for our use case. Here is what we are going to do:

- Create a group in IAM which has full access to S3. Call this group s3-admins

- Create an IAM user ghost-blogger and assign this user to the group s3-admins

- Copy the generated credentials of our user ghost-blogger and use them to set up AWS CLI on our local machine
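For reference, the same three steps can be scripted with the AWS CLI's iam subcommands. This only works if the CLI is already configured with credentials that are allowed to manage IAM (for example, an administrator's), which is not the case yet at this point in the tutorial, so the console walkthrough that follows is the path this article takes. create_blog_deployer is a hypothetical helper name.

```shell
# create_blog_deployer: hypothetical helper. Requires an AWS CLI profile
# that is allowed to manage IAM groups, users and access keys.
create_blog_deployer() {
  local group="s3-admins" user="ghost-blogger"
  # Group with the AWS managed policy that grants full access to S3.
  aws iam create-group --group-name "$group" &&
  aws iam attach-group-policy --group-name "$group" \
    --policy-arn arn:aws:iam::aws:policy/AmazonS3FullAccess &&
  # User intended for programmatic access only (no console password).
  aws iam create-user --user-name "$user" &&
  aws iam add-user-to-group --group-name "$group" --user-name "$user" &&
  # Prints the new Access Key ID and Secret Access Key; save them now.
  aws iam create-access-key --user-name "$user"
}
```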

First of all, log in to the AWS Console from your web browser. Then navigate to IAM > Groups.

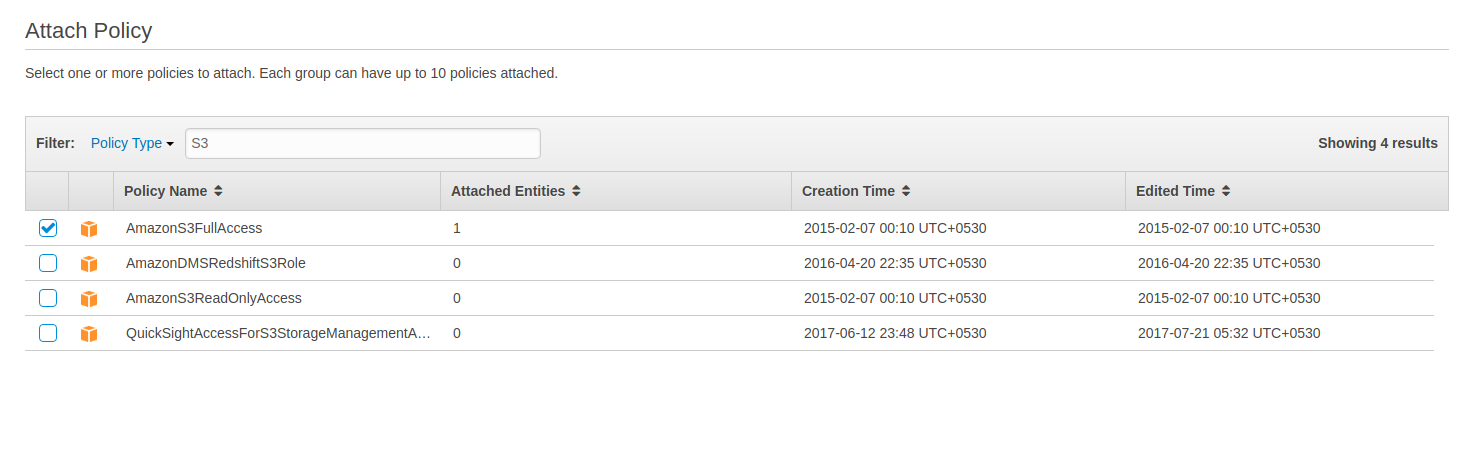

Click on the Create New Group button. Enter the group name s3-admins and click next. In the next page you will see the option to attach a policy. Policies are contracts which govern all the access controls. In the Policy Type box, type: S3. It will automatically filter all the policies associated with S3. We need to select the policy AmazonS3FullAccess. Any entity (user or group) associated with this policy will have full access to AWS S3 for this AWS account. Look at the screenshot below:

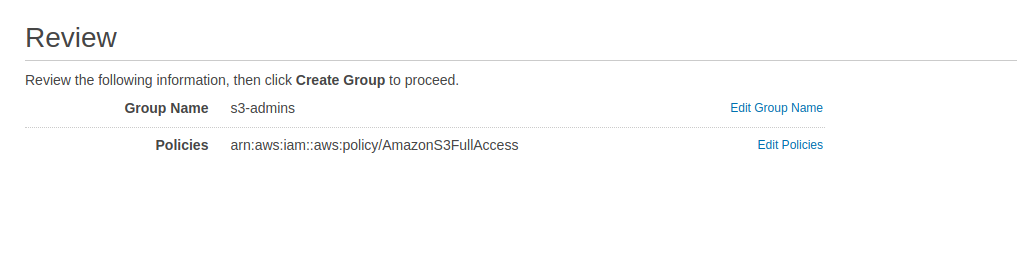

Select the AmazonS3FullAccess policy and click Next Step.

Review the details and click on Create Group button. You should be able to see the newly created group in the group list.

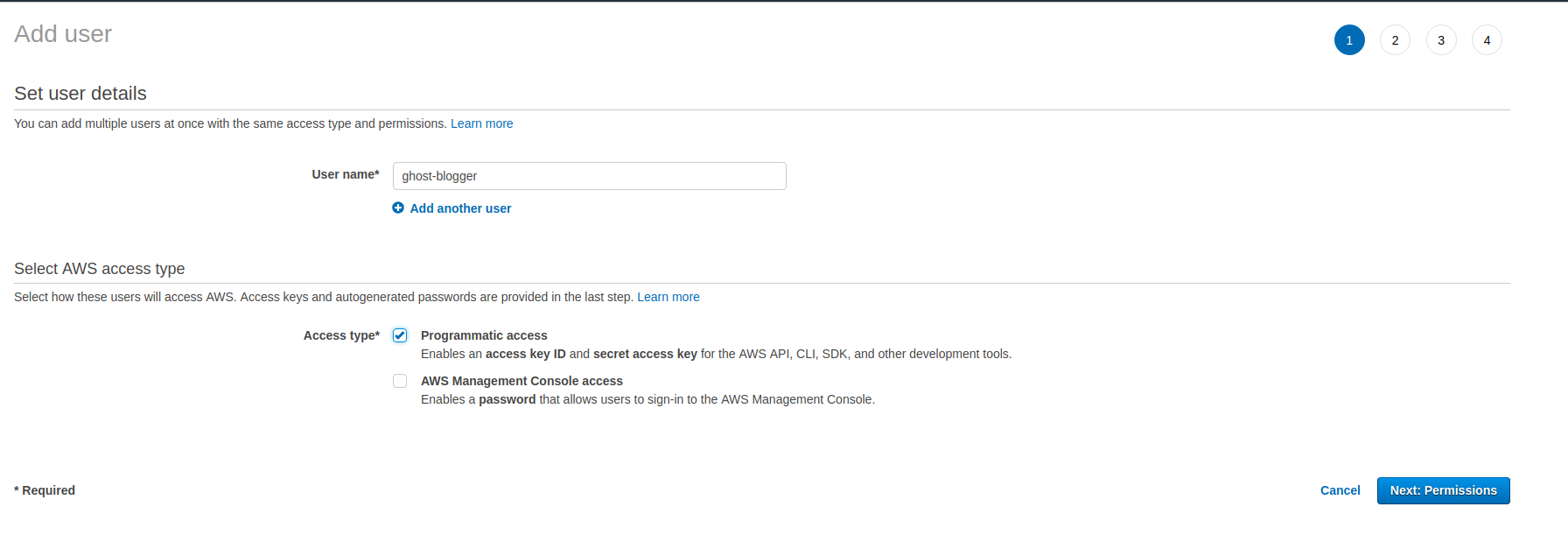

Now, we need to create a user ghost-blogger and link it with the group s3-admins. Go to IAM > Users for this. Click the Add User button. This will open the form for creating a new user. Enter ghost-blogger as the username. At the bottom of the form, you will see two checkboxes for selecting which type of access we want to grant this user. In our case, we only need Programmatic access, because we will use this user only for uploading our static assets to the S3 bucket from the AWS CLI. So, go ahead and select the Programmatic access checkbox and click Next.

In the next step, we add this user to the s3-admins group that we had created earlier. Review the information and proceed to create the user.

Very Very Important: Once you create the user, you will see its Access Key ID and Secret Access Key. AWS also provides an option to download the credentials as a CSV file; do that. Keep the credentials somewhere safe, because AWS will never show the Secret Access Key again.

Did you copy the credentials? Yes? Good. Now let's get back to our local machine and configure the AWS CLI. First, we need to install it: check out the AWS CLI installation instructions and follow the ones specific to your operating system. Verify the installation by running the aws --version command in the terminal.

Once it is installed, we need to configure it so that it can upload our static website assets to S3.

Open the terminal and type the command

$ aws configure

It will prompt you for your Access Key ID and Secret Access Key one by one. Paste the credentials that you copied earlier. For the rest of the prompts, you can just press Enter. To verify that the CLI is configured correctly, type the following command in the terminal:

$ aws s3 ls

This command lists all the S3 buckets in your AWS account. If you do not have any buckets yet, the command will run without printing anything. If it throws no error, the AWS CLI is configured correctly and we can use it to upload our static website assets to S3. But first, we need to create the buckets, and that is our next step.
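Because aws s3 ls prints nothing when there are no buckets, a more explicit check is to ask AWS which identity the CLI is using. The standard aws sts get-caller-identity command does that and needs no extra permissions; with the setup above, the ARN it prints should end in user/ghost-blogger. whoami_aws is a hypothetical helper name.

```shell
# whoami_aws: hypothetical helper that prints the ARN of the IAM identity
# the AWS CLI is currently authenticated as.
whoami_aws() {
  aws sts get-caller-identity --query Arn --output text
}
```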

Step 6 — Creating and configuring S3 buckets for your domain

Let us say we own a domain called yourdomain.com. We want to serve our website at http://yourdomain.com, and if any user accesses http://www.yourdomain.com, we want to redirect them to http://yourdomain.com. To set up our domains this way, we will need to create two buckets on S3:

- yourdomain.com

- www.yourdomain.com

Please note that you will need to create the buckets as per the domain name you own. Here yourdomain is just a placeholder. For example, in my case I had to create the buckets codingfundas.com and www.codingfundas.com.
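The buckets can be created in the S3 console, or with the AWS CLI's aws s3 mb command as sketched below. Bucket names are globally unique, so creation fails if someone else already owns the name. The region ap-south-1 is only an example that matches the endpoint URLs in this article, and create_site_buckets is a hypothetical helper name.

```shell
# create_site_buckets: hypothetical helper that creates the main bucket and
# the www redirect bucket. Usage: create_site_buckets yourdomain.com [region]
create_site_buckets() {
  [ -n "$1" ] || { echo "usage: create_site_buckets yourdomain.com [region]" >&2; return 1; }
  local domain="$1" region="${2:-ap-south-1}"   # example region, change as needed
  aws s3 mb "s3://$domain" --region "$region" &&
  aws s3 mb "s3://www.$domain" --region "$region"
}
```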

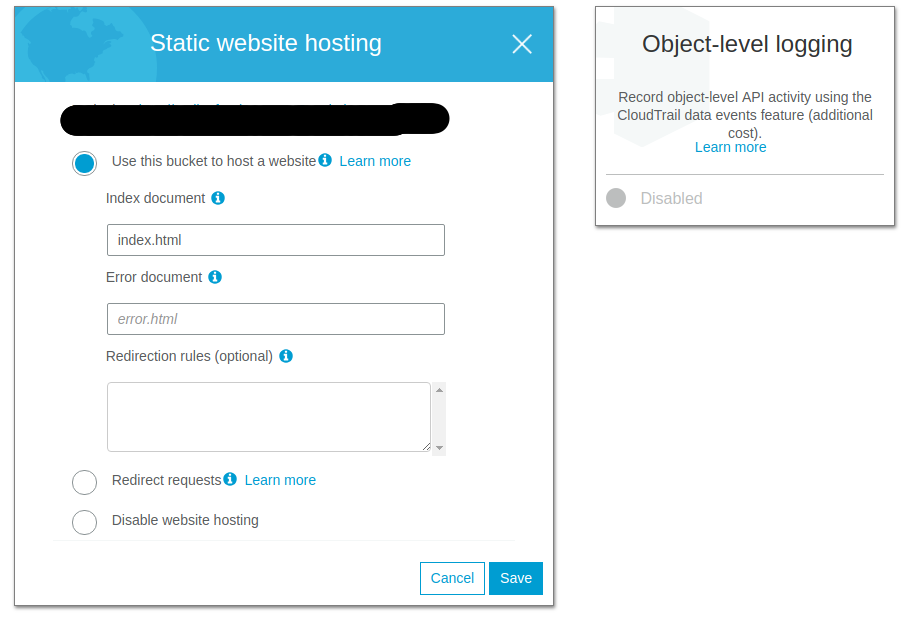

Once you have created the buckets, click on the yourdomain.com bucket. Then click on Properties tab. Click on Static website hosting and then select the box Use this bucket to host a website. In the index document field, fill index.html and click Save.

Note down the url mentioned in the Endpoint (the one masked in the above screenshot). This is the public url of your website and it will look something like this:

http://yourdomain.com.s3-website.ap-south-1.amazonaws.com

Instead of ap-south-1, you might have something else in your case depending on the region you chose for your S3 bucket. Note it down and save it somewhere. We will need it later to access our website and map our domain to S3 bucket.
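The CLI counterpart of the Static website hosting setting is the aws s3 website command. The sketch below sets only the index document, matching the console steps above; enable_website_hosting is a hypothetical helper name.

```shell
# enable_website_hosting: hypothetical helper; the CLI counterpart of
# Properties > Static website hosting > "Use this bucket to host a website".
enable_website_hosting() {
  [ -n "$1" ] || { echo "usage: enable_website_hosting yourdomain.com" >&2; return 1; }
  aws s3 website "s3://$1/" --index-document index.html
}
```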

Then click on Permissions tab and then click on Bucket Policy. Paste the following policy in the box below (replace yourdomain with the actual domain name):

{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Sid": "PublicReadForGetBucketObjects",
      "Effect": "Allow",
      "Principal": "*",
      "Action": "s3:GetObject",
      "Resource": "arn:aws:s3:::yourdomain.com/*"
    }
  ]
}

Click Save. If you entered the policy correctly, it will be saved; otherwise, AWS will show an error. If saving is rejected with an access denied error, the bucket's Block Public Access settings (also on the Permissions tab) are probably blocking public bucket policies; S3 turns them on by default for new buckets, so you will need to turn them off for this bucket. As mentioned earlier, policies in AWS are contracts that dictate the access control for AWS resources. The above policy allows anyone on the internet to read (s3:GetObject) every object in our bucket yourdomain.com. It grants read-only access and gives nobody write access, so only IAM identities with S3 permissions, such as our ghost-blogger user, can write to this bucket. Upon saving the policy, you might get a warning telling you that this bucket has public access. That's fine, since we are using this bucket to host a website and it needs public access. AWS gives that warning because granting public access would be a bad idea if the bucket were used for some other purpose. For example, if we were using an S3 bucket to store the personal details of our application's users, it would be a disaster if that bucket had public access. Hence the warning. But we don't need to worry about it, since we are using this bucket to host a website.
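The same policy can be applied with aws s3api put-bucket-policy, which takes the policy document as a JSON string. In the sketch below, bucket_policy and apply_bucket_policy are hypothetical helper names; bucket_policy prints exactly the policy shown above for a given bucket. As in the console, the call is rejected while Block Public Access blocks public bucket policies.

```shell
# bucket_policy: hypothetical helper that prints the public-read policy
# shown above for the given bucket name.
bucket_policy() {
  local bucket="$1"
  cat <<EOF
{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Sid": "PublicReadForGetBucketObjects",
      "Effect": "Allow",
      "Principal": "*",
      "Action": "s3:GetObject",
      "Resource": "arn:aws:s3:::${bucket}/*"
    }
  ]
}
EOF
}

# apply_bucket_policy: hypothetical helper that attaches that policy.
apply_bucket_policy() {
  [ -n "$1" ] || { echo "usage: apply_bucket_policy yourdomain.com" >&2; return 1; }
  aws s3api put-bucket-policy --bucket "$1" --policy "$(bucket_policy "$1")"
}
```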

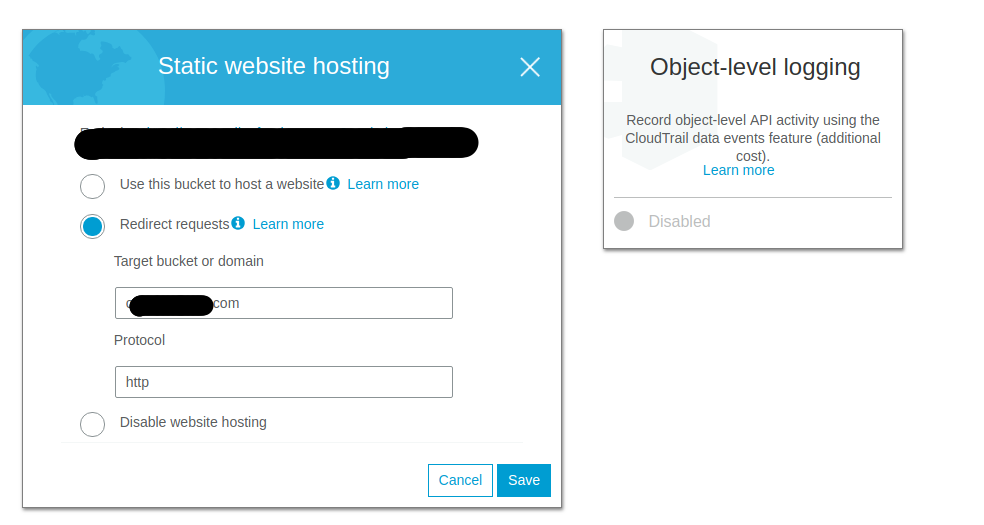

Once we have configured our bucket yourdomain.com, we then need to configure the other bucket www.yourdomain.com to redirect the traffic to yourdomain.com. So, from S3 buckets listing, click on the bucket www.yourdomain.com and then click on Properties tab and then Static website hosting. Check the option Redirect requests and then in the Target bucket or domain field, enter the name of the other bucket, that is yourdomain.com and in the Protocol field, enter http.

Click Save. Note down the url mentioned in the Endpoint (the one masked in the above screenshot). This is the public url of your website and it will look something like this:

http://www.yourdomain.com.s3-website.ap-south-1.amazonaws.com

Instead of ap-south-1, you might have something else in your case depending on the region you chose for your S3 bucket. Note it down and save it somewhere. We will need it later to access our website and map our domain to S3 bucket.
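The redirect can also be configured with aws s3api put-bucket-website, which takes the website configuration as JSON. RedirectAllRequestsTo, with its HostName and Protocol fields, is the S3 setting behind the Redirect requests option; redirect_config and enable_www_redirect are hypothetical helper names.

```shell
# redirect_config: hypothetical helper printing a website configuration
# that redirects every request to the given host over http.
redirect_config() {
  printf '{"RedirectAllRequestsTo":{"HostName":"%s","Protocol":"http"}}\n' "$1"
}

# enable_www_redirect: applies that configuration to the www bucket.
enable_www_redirect() {
  [ -n "$1" ] || { echo "usage: enable_www_redirect yourdomain.com" >&2; return 1; }
  aws s3api put-bucket-website --bucket "www.$1" \
    --website-configuration "$(redirect_config "$1")"
}
```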

This completes our setup of S3 buckets.

Now we are ready to add the assets of our static website to our S3 bucket. Since we have configured yourdomain.com as our main bucket and www.yourdomain.com as the redirect bucket, we need to upload our assets in the yourdomain.com bucket. And that's what we are going to do in the next step.

Step 7 — Deploying your static website assets (generated by HTTrack) to your S3 bucket

Remember the time we created assets for our static website using HTTrack? :-)

Good. We need those now: we are going to deploy the static assets to our S3 bucket using the AWS CLI. Open your terminal and go to the my-awesome-blog directory. If you followed all the instructions properly, you should have a directory named static-website inside it, and a directory named localhost_2368 inside static-website. In short, the path should look like this:

$HOME/my-awesome-blog/static-website/localhost_2368

Make sure that directory exists. Then change into the my-awesome-blog/static-website directory and execute the following command:

$ aws s3 sync localhost_2368 s3://yourdomain.com \
--acl public-read \
--delete

Let us see what this command does.

- aws s3 sync: is a command that synchronizes the source with the target. Here, source is the sub-directory localhost_2368 which contains the assets for our static website. And the target is our S3 bucket yourdomain.com.

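- --acl public-read: This grants public read access to all the files being uploaded to the bucket. Since the bucket policy from Step 6 already makes every object publicly readable, this flag is redundant. On buckets with ACLs disabled (the S3 default for buckets created since April 2023), S3 rejects uploads that set an ACL, so leave the flag out in that case.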

- --delete: If any files are present in the S3 bucket which are no longer present in the source directory, then delete them from the S3 bucket.
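Because --delete removes objects from the bucket, it can be worth previewing a sync before running it. aws s3 sync accepts a --dryrun flag that prints the uploads and deletions it would perform without changing anything. preview_deploy is a hypothetical helper; run it from the static-website directory, just like the real command.

```shell
# preview_deploy: hypothetical helper. --dryrun makes `aws s3 sync` list
# what it would upload and delete without touching the bucket.
preview_deploy() {
  [ -n "$1" ] || { echo "usage: preview_deploy yourdomain.com" >&2; return 1; }
  aws s3 sync localhost_2368 "s3://$1" --delete --dryrun
}
```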

The command will print the logs to the terminal, showing that it is uploading the files from your local machine to your S3 bucket. Once it completes, go to your AWS console on your browser and check the S3 buckets to see if the newly added assets appear there or not. You might need to refresh the page. Please note that assets will only appear in yourdomain.com bucket and not on the www.yourdomain.com bucket since they were uploaded only on yourdomain.com bucket.

Remember the Endpoint URLs for your S3 buckets that I asked you to note down earlier? They looked something like these:

http://yourdomain.com.s3-website.ap-south-1.amazonaws.com

http://www.yourdomain.com.s3-website.ap-south-1.amazonaws.com

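If you visit the first of these URLs, you should see your blog there. (The www endpoint redirects to http://yourdomain.com, which will only work once the domain is set up in Step 8.) Go and celebrate! Your awesome blog is now published on the internet. Call your friends and tell them that they can visit your blog at: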

http://yourdomain.com.s3-website.ap-south-1.amazonaws.com

Well, chances are that after calling a few friends, you'll realize that the address of your blog is quite long and hard to remember, even for yourself. Wouldn't it be nice if you could serve your blog directly from:

http://yourdomain.com

That's much easier to remember. Indeed, that would be awesome! And that's exactly what we're going to do in our next and final step.
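Before moving on, you can also check the bucket endpoints from the terminal with curl. The main bucket's endpoint should answer with HTTP 200, while the www endpoint should answer with a 301 redirect. check_endpoint is a hypothetical helper name, and the endpoint URLs are the placeholders used throughout this article.

```shell
# check_endpoint: hypothetical helper; succeeds only if the URL answers with
# the expected HTTP status (200 by default). curl reports 000 when it
# cannot connect at all.
check_endpoint() {
  local url="$1" expected="${2:-200}" code
  code="$(curl -s -o /dev/null -w '%{http_code}' "$url")"
  echo "$url -> HTTP $code"
  [ "$code" = "$expected" ]
}
```

For example:

$ check_endpoint http://yourdomain.com.s3-website.ap-south-1.amazonaws.com
$ check_endpoint http://www.yourdomain.com.s3-website.ap-south-1.amazonaws.com 301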

Step 8 — Pointing your Godaddy domain to your S3 bucket using Route 53

AWS Route 53 is a Domain Name System (DNS) web service. While a discussion of DNS and name resolution is beyond the scope of this tutorial, you need to know that we will take the following steps to point our Godaddy domain to our S3 buckets:

- Create a hosted zone in Route 53

- Connect this hosted zone to our S3 buckets by adding record sets

- Copy the Name servers from our hosted zone and update the same in our Godaddy domain's settings

Here I am assuming that you purchased the domain from Godaddy. If you purchased it from another domain registrar, the process should be similar.

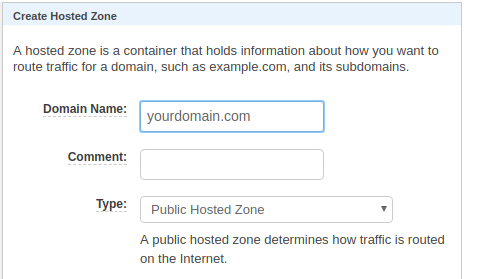

So, first we go to AWS Route 53. Click the button Create Hosted Zone. Enter your domain name in the text box without www prefix. In our example, it will be yourdomain.com.

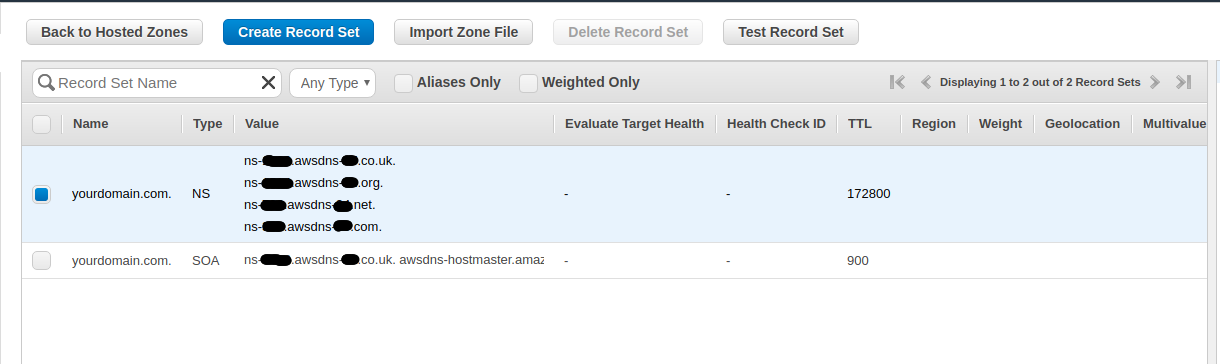

By default, it will create two record sets, one of type NS and the other of type SOA. It will look like this:

Note that the NS record will have 4 name server values. Copy all 4 of them and save them somewhere; we will need them when editing the domain settings in Godaddy.

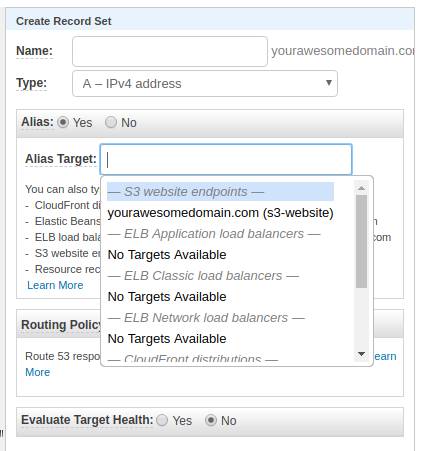
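If you prefer the CLI, aws route53 create-hosted-zone creates the zone and returns its delegation set, so the four name servers can be printed straight away. Note that the ghost-blogger user from Step 5 only has S3 permissions, so this needs a CLI profile that is allowed to manage Route 53. create_zone is a hypothetical helper name.

```shell
# create_zone: hypothetical helper that creates the hosted zone and prints
# the name servers Route 53 assigned to it.
create_zone() {
  [ -n "$1" ] || { echo "usage: create_zone yourdomain.com" >&2; return 1; }
  # --caller-reference must be unique per request; a timestamp is enough here.
  aws route53 create-hosted-zone --name "$1" \
    --caller-reference "blog-$(date +%s)" \
    --query 'DelegationSet.NameServers' --output text
}
```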

OK, we have created a hosted zone. Now we need to link our S3 buckets with it. For that, click the Create Record Set button.

Leave the Name as blank. It will be yourdomain.com by default.

Then leave the Type as A - IPv4 address.

For Alias, select Yes.

Then click in the Alias Target textbox. You should automatically see the name of your S3 bucket yourdomain.com. Select it.

Leave the Routing Policy as Simple and Evaluate Target Health as No.

The screenshot below contains the name yourawesomedomain instead of yourdomain as the S3 bucket by that name was not available. So for demo purposes, I created another hosted zone by the name of yourawesomedomain.com

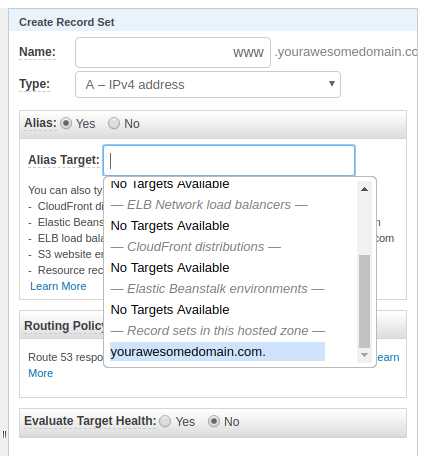

Finally click on Create button at the bottom. This will link our bucket yourdomain.com to our hosted zone. We need to also link our second bucket www.yourdomain.com. For that, again click on Create Record Set button.

This time, in the Name field, enter www

Then leave the Type as A - IPv4 address.

For Alias, select Yes.

Then click in the Alias Target textbox. This time, scroll to the bottom till you see the section Record sets in this hosted zone and under that you should see the entry for yourdomain.com. You need to select that.

Leave the Routing Policy as Simple and Evaluate Target Health as No.

Finally click on Create button at the bottom.
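The two alias records can also be created with aws route53 change-resource-record-sets, which takes a JSON change batch. The sketch below only builds that JSON; alias_change_batch is a hypothetical helper. For the apex record, the alias target is the S3 website endpoint domain (your bucket's Endpoint URL without the bucket-name prefix, for example s3-website.ap-south-1.amazonaws.com) together with the S3 website hosted zone ID for your region, which you need to look up in the AWS documentation. For the www record, the target is yourdomain.com and your own hosted zone's ID. As with the hosted zone, this needs a profile with Route 53 permissions.

```shell
# alias_change_batch: hypothetical helper printing a Route 53 change batch
# that creates or updates (UPSERT) one alias A record.
#   $1 record name, e.g. yourdomain.com or www.yourdomain.com
#   $2 alias target DNS name
#   $3 hosted zone ID of the alias target
alias_change_batch() {
  local name="$1" target="$2" zone_id="$3"
  printf '{"Changes":[{"Action":"UPSERT","ResourceRecordSet":{"Name":"%s","Type":"A","AliasTarget":{"HostedZoneId":"%s","DNSName":"%s","EvaluateTargetHealth":false}}}]}\n' \
    "$name" "$zone_id" "$target"
}

# Usage sketch (values in angle brackets are placeholders to fill in):
#   aws route53 change-resource-record-sets --hosted-zone-id <your zone ID> \
#     --change-batch "$(alias_change_batch yourdomain.com \
#       s3-website.ap-south-1.amazonaws.com <S3 website zone ID for your region>)"
```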

Now our hosted zone should have 4 record sets: two Type A records, one Type SOA record and one Type NS record. This completes our configuration in AWS Route 53. Now we need to go to Godaddy and change the settings there to point our domain to the S3 buckets. The NS record in Route 53 has 4 name servers in its value field. Earlier, I asked you to copy them; now we will need them.

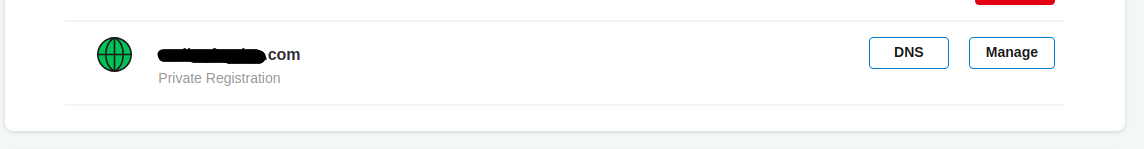

Log in to your Godaddy account and navigate to the products page. You should see all your domains there. Look for the domain that you want to point to the S3 bucket and click the DNS button beside it.

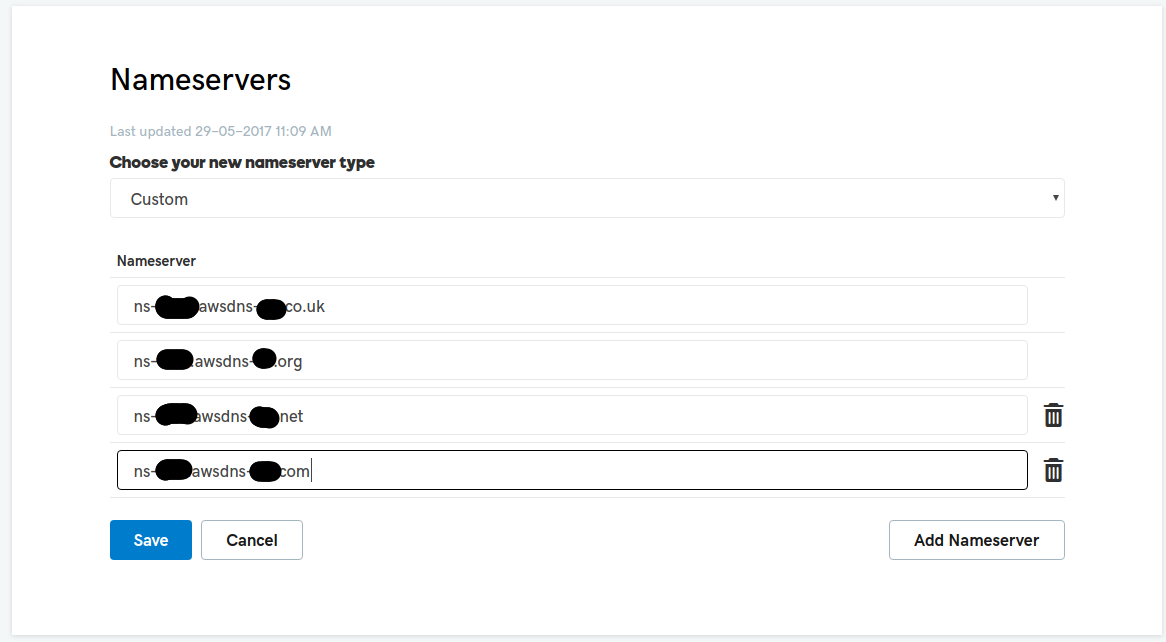

That will open a page with the settings for your domain. Scroll down to the Nameservers section and click the Change button. Select Custom from the dropdown. Then, one by one, enter the 4 name servers copied earlier from Route 53.

Click Save.

That's it! This completes our configuration at Godaddy as well. The name server change can take anywhere from a few minutes to up to 48 hours to propagate. Afterwards, you should be able to visit your blog at http://yourdomain.com and http://www.yourdomain.com.
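To see whether the change has propagated, you can ask DNS which name servers your domain is delegated to. Once the change is live, dig should list the same four name servers as the NS record in Route 53. This assumes the dig tool (from the BIND utilities) is installed; check_nameservers is a hypothetical helper name.

```shell
# check_nameservers: hypothetical helper that prints the name servers the
# domain is currently delegated to, as seen by your DNS resolver.
check_nameservers() {
  [ -n "$1" ] || { echo "usage: check_nameservers yourdomain.com" >&2; return 1; }
  dig +short NS "$1"
}
```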

Conclusion

Congratulations! You now know how to use Ghost as a blogging engine for your own blog, and how to significantly reduce the cost of hosting it by serving it from AWS S3 as a static website. Whenever you make any changes to your blog, you need to repeat Step 4 and Step 7 to make them live on your domain; it hardly takes a minute. This is how I hosted my blog, and at the time of writing this tutorial, my blog is one week old. I am still exploring Ghost to see what cool things I can do with it. I hope this tutorial was not just a step-by-step walkthrough but also helped you understand the concepts involved.
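Repeating Step 4 and Step 7 can be wrapped in a small script. The sketch below assumes the blog lives in ~/my-awesome-blog, that npm start is serving it on localhost:2368, that the AWS CLI is configured as in Step 5, and that HTTrack's -O option produces the same static-website/localhost_2368 layout as the wizard; redeploy_blog is a hypothetical helper name. It runs in a subshell so the cd does not change your terminal's working directory.

```shell
# redeploy_blog: hypothetical helper that repeats Step 4 and Step 7.
# Usage: redeploy_blog yourdomain.com
redeploy_blog() (
  domain="$1"
  [ -n "$domain" ] || { echo "usage: redeploy_blog yourdomain.com" >&2; exit 1; }
  cd "$HOME/my-awesome-blog" || exit 1
  # Start from a fresh mirror so that deleted posts do not linger locally.
  rm -rf static-website
  httrack "http://localhost:2368/" -O ./static-website || exit 1
  # No --acl flag: the bucket policy from Step 6 already makes objects public.
  aws s3 sync static-website/localhost_2368 "s3://$domain" --delete
)
```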

If you liked this tutorial and it helped you, please share it on social media and help others by using the social media sharing buttons at the bottom. If you have any feedback, please feel free to mention those in the comments section below.

Happy Coding :-)